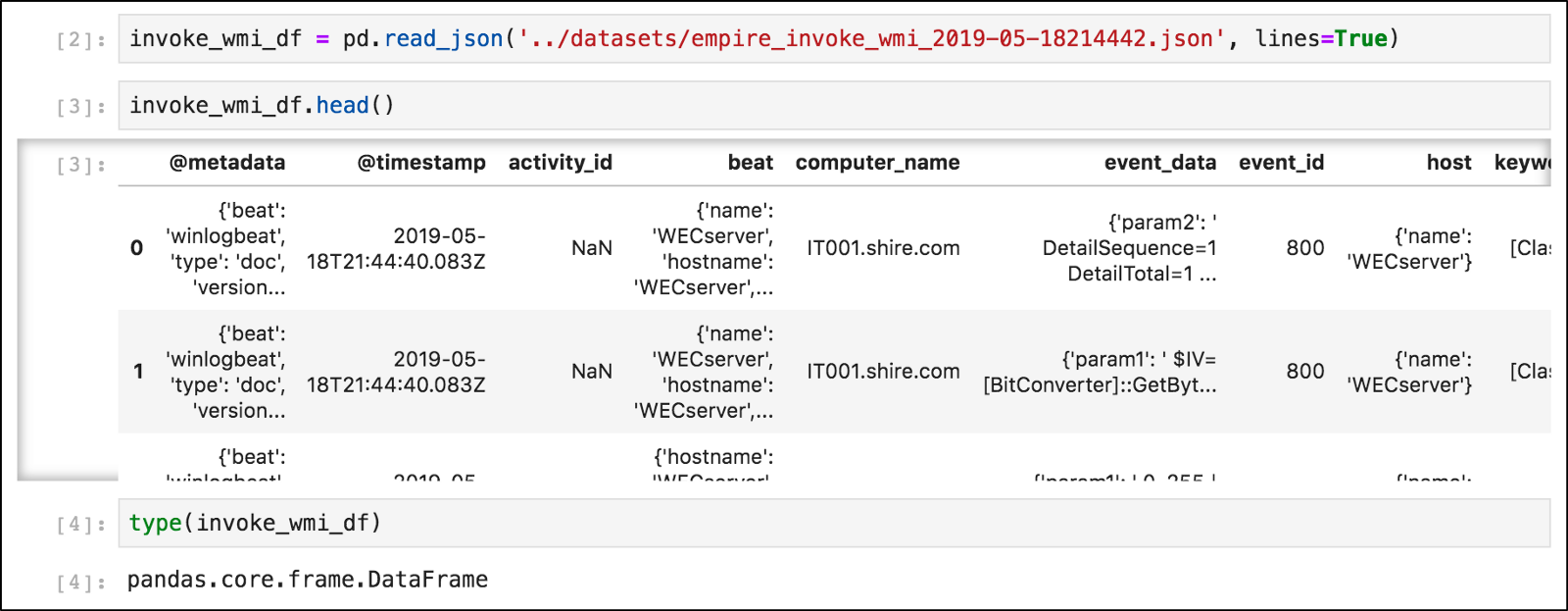

I would like to flatten JSON blobs into a DataFrame using Spark/Spark SQL inside spark-shell.

import org.apache.spark.sql.types.StringType

val df = spark.sql("select body from test limit 3") // body is a JSON-encoded blob column

val df2 = df.select(df("body").cast(StringType).as("body"))

When I do

df2.show // shows the 3 rows

body

{'k1': 'v1', 'k2': 'v2' }

{'k3': 'v3'}

{'k4': 'v4', 'k5': 'v5', 'k6': 'v6'}

Now say I have a billion of these rows/records, but at most there will be 5 different JSON schemas across all of them. How do I flatten them so that I get a DataFrame in the format below? Should I use df.foreach, df.foreachPartition, df.explode or df.flatMap? How do I make sure I am not creating a billion DataFrames and trying to union all of them, or doing something even more inefficient? It would be great if I could see a code sample. Also, since this might have Nil values, I wonder if they would take up any space. I initially tried it with UDFs and I kept getting an exception saying the 'Any' type is not supported, and so on. So an example of how to do this would help!

| 'K1' | 'K2' | 'K3' | 'K4' | 'K5' | 'K6' |
|------|------|------|------|------|------|
| 'V1' | 'V2' |      |      |      |      |
|      |      | 'V3' |      |      |      |
|      |      |      | 'V4' | 'V5' | 'V6' |

Needless to say, V1, V2, V3 .. V6 can be any type (String, Boolean, Integer, Map, ...). I am also open to any new ideas.

This seems to work

However, I wonder if there is any inefficiency here, since I have to apply this function to a billion rows.

Michael Armbrust, Nov 21 (1 day ago), to kant, user:

In Spark 2.1 we've added a from_json function that I think will do what you want.
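A minimal sketch of how the from_json suggestion could be applied to the question above. It assumes all keys (k1 to k6) are known up front and, purely for illustration, that every value is a string; the object name and sample rows are hypothetical. One schema covering the union of the keys is applied to every row, so no per-row DataFrames or unions are needed, and keys missing from a row come back as null.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{col, from_json}
import org.apache.spark.sql.types.{StringType, StructField, StructType}

object FromJsonSketch {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("from-json-sketch")
      .getOrCreate()
    import spark.implicits._

    // Stand-in for the `body` column from the question (assumed sample data).
    val df = Seq(
      """{"k1": "v1", "k2": "v2"}""",
      """{"k3": "v3"}""",
      """{"k4": "v4", "k5": "v5", "k6": "v6"}"""
    ).toDF("body")

    // Assumption: one nullable string field per known key, k1..k6.
    val schema = StructType(
      (1 to 6).map(i => StructField(s"k$i", StringType, nullable = true))
    )

    // Parse each blob into a struct, then expand the struct into top-level columns.
    val flat = df
      .select(from_json(col("body"), schema).as("parsed"))
      .select("parsed.*")

    flat.show()
    spark.stop()
  }
}
```

If the values really are of mixed types, the field types in `schema` would have to be adjusted per key; that part is not covered by this sketch.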

I have a dataframe with the following structure:

How is it possible to flatten the structure and create a new dataframe:

Is there something like explode, but for structs?

user6910411

djWann

5 Answers

Here is a function that does what you want and that can deal with multiple nested columns containing columns with the same name:

Before:

After:

steco

I generalized steco's solution a bit more so that the flattening can be done on structs nested more than two layers deep:

just call with:

(the second parameter is the number of layers to flatten; in my case it's 3)

Aydin K.

An easy way is to use SQL: you could build a SQL query string that aliases nested columns as flat ones.

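The original steps for the SQL approach are not preserved here. As a rough sketch of the idea, assuming the goal is one aliased expression per leaf field, the query string could be generated from the schema like this. `SqlFlattenSketch`, `leafParts`, `flattenWithSql` and the underscore naming are hypothetical choices, not part of the answer.

```scala
import org.apache.spark.sql.DataFrame
import org.apache.spark.sql.types.StructType

object SqlFlattenSketch {
  // Each leaf field as its list of name parts, e.g. Seq("address", "city").
  def leafParts(schema: StructType, parents: Seq[String] = Seq.empty): Seq[Seq[String]] =
    schema.fields.toSeq.flatMap { f =>
      f.dataType match {
        case st: StructType => leafParts(st, parents :+ f.name)
        case _              => Seq(parents :+ f.name)
      }
    }

  // Registers `df` as a temp view, builds
  //   SELECT `a`.`b` AS `a_b`, ... FROM `viewName`
  // and runs it on the DataFrame's own session.
  def flattenWithSql(df: DataFrame, viewName: String): DataFrame = {
    df.createOrReplaceTempView(viewName)
    val selectList = leafParts(df.schema)
      .map { parts =>
        val path  = parts.map(n => s"`$n`").mkString(".")
        val alias = parts.mkString("_")
        s"$path AS `$alias`"
      }
      .mkString(", ")
    df.sparkSession.sql(s"SELECT $selectList FROM `$viewName`")
  }
}
```

Calling `SqlFlattenSketch.flattenWithSql(df, "nested")` would return a DataFrame whose columns are named after the full path of each leaf, joined with underscores.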

This flatten_df version flattens the dataframe at every layer level, using a stack to avoid recursive calls:

Example:

Prints:

federicojasson
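The flatten_df snippet itself did not survive in this copy. The stack-based idea it describes can be sketched in Scala roughly as follows; this is an assumption-laden illustration rather than the answer's code, and `IterativeFlattenSketch` and `flattenDf` are hypothetical names. An explicit stack of (parent path, struct schema) pairs replaces the recursive calls.

```scala
import org.apache.spark.sql.{Column, DataFrame}
import org.apache.spark.sql.functions.col
import org.apache.spark.sql.types.StructType
import scala.collection.mutable.ArrayBuffer

object IterativeFlattenSketch {
  def flattenDf(df: DataFrame): DataFrame = {
    // Work list of structs still to visit, used as a stack (push and pop at the head).
    var stack: List[(Vector[String], StructType)] =
      List((Vector.empty[String], df.schema))
    val columns = ArrayBuffer.empty[Column]

    while (stack.nonEmpty) {
      val (parents, schema) = stack.head
      stack = stack.tail
      schema.fields.foreach { f =>
        val path = parents :+ f.name
        f.dataType match {
          // Nested struct: visit its fields later.
          case st: StructType => stack = (path, st) :: stack
          // Leaf: select by dotted path, alias with underscores to keep names unique.
          case _ => columns += col(path.mkString(".")).as(path.mkString("_"))
        }
      }
    }
    df.select(columns.toList: _*)
  }
}
```

Because structs are visited in stack order, the output columns are not guaranteed to follow the original schema order. Field names that themselves contain dots are not handled by this sketch.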

All,

Is there an elegant and accepted way to flatten a Spark SQL table (Parquet) with columns that are of nested StructType?

For example, if my schema is:

How do I select it into a flattened tabular form without resorting to manually running

In other words, how do I obtain the result of the above code programmatically, given just a StructType and a DataFrame?

echen

9 Answers

The short answer is, there's no 'accepted' way to do this, but you can do it very elegantly with a recursive function that generates your select(..) statement by walking through the DataFrame.schema.

The recursive function should return an Array[Column]. Every time the function hits a StructType, it would call itself and append the returned Array[Column] to its own Array[Column].

Something like:

You would then use it like this:

David Griffin
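The code from this answer is missing here. A minimal sketch of the recursive approach it describes, returning an Array[Column] and recursing on every StructType, might look like the following. The sample case classes and the object name are hypothetical and only serve to build a nested DataFrame.

```scala
import org.apache.spark.sql.{Column, SparkSession}
import org.apache.spark.sql.functions.col
import org.apache.spark.sql.types.StructType

object FlattenSchemaSketch {
  // Hypothetical sample types, used only to create nested data.
  case class Address(city: String, zip: String)
  case class Person(name: String, address: Address)

  // Walk the schema; recurse into each StructType and emit one Column per leaf field.
  def flattenSchema(schema: StructType, prefix: Option[String] = None): Array[Column] =
    schema.fields.flatMap { f =>
      val path = prefix.map(p => s"$p.${f.name}").getOrElse(f.name)
      f.dataType match {
        case st: StructType => flattenSchema(st, Some(path))
        case _              => Array(col(path))
      }
    }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("flatten-schema-sketch")
      .getOrCreate()
    import spark.implicits._

    val df = Seq(Person("Ann", Address("Oslo", "0150"))).toDF()

    // Usage: pass the generated columns to select.
    val flat = df.select(flattenSchema(df.schema): _*)
    flat.printSchema() // top-level columns: name, city, zip
    spark.stop()
  }
}
```

Each selected column keeps only the leaf name (`address.city` becomes `city`), so two structs with identically named children would produce duplicate column names.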

I am improving my previous answer and offering a solution to my own problem stated in the comments of the accepted answer.

This accepted solution creates an array of Column objects and uses it to select these columns. In Spark, if you have a nested DataFrame, you can select the child column like this: df.select("Parent.Child"). This returns a DataFrame with the values of the child column, and the column is named Child. But if you have identical names for attributes of different parent structures, you lose the info about the parent and may end up with identical column names, which you can no longer access by name because they are ambiguous. This was my problem.

I found a solution to my problem; maybe it can help someone else as well. I called flattenSchema separately:

and this returned an Array of Column objects. Instead of using this directly in select(), which would return a DataFrame with columns named after the child of the last level, I mapped the original column names to themselves as strings, so that after selecting the Parent.Child column it is renamed to Parent.Child instead of Child (I also replaced dots with underscores for my convenience):

And then you can use the select function as shown in the original answer:

V. Samma

Just wanted to share my solution for Pyspark - it's more or less a translation of @David Griffin's solution, so it supports any level of nested objects.

Steve Ng

Evan V

You could also use SQL to select columns as flat.

I added a DataFrame#flattenSchema method to the open source spark-daria project.

Here's how you can use the function with your code.

You can also specify different column name delimiters with the flattenSchema() method.

This delimiter parameter is surprisingly important. If you're flattening your schema to load the table in Redshift, you won't be able to use periods as the delimiter.

Here's the full code snippet to generate this output.

The underlying code is similar to David Griffin's code (in case you don't want to add the spark-daria dependency to your project).

Powers

To combine David Griffin's and V. Samma's answers, you could just do this to flatten while avoiding duplicate column names:

swdev
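The combined snippet is not preserved here. One way to sketch the combination, assuming the flat names should be the full parent path with a configurable delimiter, is to collect the dotted leaf paths first and alias each selected column with its path. `FlattenWithAliasSketch`, `leafPaths`, `flatten` and the `delimiter` parameter are hypothetical names for illustration, not spark-daria's or any answer's API.

```scala
import org.apache.spark.sql.DataFrame
import org.apache.spark.sql.functions.col
import org.apache.spark.sql.types.StructType

object FlattenWithAliasSketch {
  // Dotted paths of all leaf (non-struct) fields, in schema order.
  def leafPaths(schema: StructType, prefix: String = ""): Seq[String] =
    schema.fields.toSeq.flatMap { f =>
      val path = if (prefix.isEmpty) f.name else s"$prefix.${f.name}"
      f.dataType match {
        case st: StructType => leafPaths(st, path)
        case _              => Seq(path)
      }
    }

  // Select every leaf and rename it to its full path, with dots replaced by
  // `delimiter`, so children of different parents cannot collide.
  def flatten(df: DataFrame, delimiter: String = "_"): DataFrame =
    df.select(leafPaths(df.schema).map(p => col(p).as(p.replace(".", delimiter))): _*)
}
```

With a struct column `address` containing `city` and `zip`, `FlattenWithAliasSketch.flatten(df)` would yield `address_city` and `address_zip`. Keeping a period as the delimiter is possible but makes the resulting columns awkward to reference, since a dot in a column name normally means nested access.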

I have been using one liners which result in a flattened schema with 5 columns of bar, baz, x, y, z:

As for explode: I typically reserve explode for flattening a list. For example, if you have a column idList that is a list of Strings, you could do:

That will result in a new DataFrame with a column named flattenedId (no longer a list).

Kei-ven
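The explode example from this answer is missing. A small sketch of what it describes, using the idList and flattenedId names from the text and otherwise assumed sample data:

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{col, explode}

object ExplodeSketch {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("explode-sketch")
      .getOrCreate()
    import spark.implicits._

    // Assumed sample data: idList is an array-of-strings column.
    val df = Seq((1, Seq("a", "b")), (2, Seq("c"))).toDF("id", "idList")

    // explode emits one output row per list element.
    val flat = df.select(col("id"), explode(col("idList")).as("flattenedId"))
    flat.show() // three rows: (1, a), (1, b), (2, c)
    spark.stop()
  }
}
```

explode turns one row into many, which is why it suits arrays and maps rather than structs; a struct is expanded into columns instead, for example with `select("structCol.*")`.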

Here is a function that is doing what you want and that can deal with multiple nested columns containing columns with same name, with a prefix:

Before:

After:

steco

This is a modification of the solution but it uses tailrec notation

fhuertas
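The tail-recursive code is not included in this copy. As an illustration only, a flatten written with @tailrec could carry the pending fields and the columns collected so far as accumulators. The names below and the underscore aliasing are assumptions, not the answer's code.

```scala
import org.apache.spark.sql.{Column, DataFrame}
import org.apache.spark.sql.functions.col
import org.apache.spark.sql.types.{StructField, StructType}
import scala.annotation.tailrec

object TailrecFlattenSketch {
  def flatten(df: DataFrame): DataFrame = {
    // pending: fields still to process, each paired with its parent path.
    // acc: columns collected so far, in schema order.
    @tailrec
    def loop(pending: List[(String, StructField)], acc: Vector[Column]): Vector[Column] =
      pending match {
        case Nil => acc
        case (prefix, field) :: rest =>
          val path = if (prefix.isEmpty) field.name else s"$prefix.${field.name}"
          field.dataType match {
            case st: StructType =>
              // Put the struct's children in front so depth-first order is preserved.
              loop(st.fields.toList.map(child => (path, child)) ::: rest, acc)
            case _ =>
              loop(rest, acc :+ col(path).as(path.replace('.', '_')))
          }
      }

    val start = df.schema.fields.toList.map(f => ("", f))
    df.select(loop(start, Vector.empty): _*)
  }
}
```

Both recursive calls are in tail position, so the compiler turns the function into a loop and deep nesting cannot overflow the call stack.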
